time="2026-02-27T03:13:01Z" level=info msg="Starting k3s v1.34.1+k3s1 (24fc436e)"

time="2026-02-27T03:13:01Z" level=info msg="Managed etcd cluster bootstrap already complete and initialized"

time="2026-02-27T03:13:01Z" level=info msg="Starting temporary etcd to reconcile with datastore"

{"level":"info","ts":"2026-02-27T03:13:01.884688Z","caller":"embed/etcd.go:132","msg":"configuring socket options","reuse-address":true,"reuse-port":true}

{"level":"info","ts":"2026-02-27T03:13:01.884775Z","caller":"embed/etcd.go:138","msg":"configuring peer listeners","listen-peer-urls":["http://127.0.0.1:2400"]}

{"level":"info","ts":"2026-02-27T03:13:01.885106Z","caller":"embed/etcd.go:146","msg":"configuring client listeners","listen-client-urls":["http://127.0.0.1:2399"]}

{"level":"info","ts":"2026-02-27T03:13:01.885259Z","caller":"embed/etcd.go:323","msg":"starting an etcd server","etcd-version":"3.6.4","git-sha":"HEAD","go-version":"go1.24.6","go-os":"linux","go-arch":"amd64","max-cpu-set":80,"max-cpu-available":80,"member-initialized":true,"name":"96515ca796bf-f0affc01","data-dir":"/var/lib/rancher/k3s/server/db/etcd-tmp","wal-dir":"","wal-dir-dedicated":"","member-dir":"/var/lib/rancher/k3s/server/db/etcd-tmp/member","force-new-cluster":true,"heartbeat-interval":"500ms","election-timeout":"5s","initial-election-tick-advance":true,"snapshot-count":10000,"max-wals":0,"max-snapshots":0,"snapshot-catchup-entries":5000,"initial-advertise-peer-urls":["http://127.0.0.1:2400"],"listen-peer-urls":["http://127.0.0.1:2400"],"advertise-client-urls":["http://127.0.0.1:2399"],"listen-client-urls":["http://127.0.0.1:2399"],"listen-metrics-urls":[],"experimental-local-address":"","cors":["*"],"host-whitelist":["*"],"initial-cluster":"","initial-cluster-state":"new","initial-cluster-token":"","quota-backend-bytes":2147483648,"max-request-bytes":1572864,"max-concurrent-streams":4294967295,"pre-vote":true,"feature-gates":"InitialCorruptCheck=true","initial-corrupt-check":false,"corrupt-check-time-interval":"0s","compact-check-time-interval":"1m0s","auto-compaction-mode":"periodic","auto-compaction-retention":"0s","auto-compaction-interval":"0s","discovery-url":"","discovery-proxy":"","discovery-token":"","discovery-endpoints":"","discovery-dial-timeout":"2s","discovery-request-timeout":"5s","discovery-keepalive-time":"2s","discovery-keepalive-timeout":"6s","discovery-insecure-transport":true,"discovery-insecure-skip-tls-verify":false,"discovery-cert":"","discovery-key":"","discovery-cacert":"","discovery-user":"","downgrade-check-interval":"5s","max-learners":1,"v2-deprecation":"write-only"}

{"level":"info","ts":"2026-02-27T03:13:01.885665Z","logger":"bbolt","caller":"backend/backend.go:203","msg":"Opening db file (/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db) with mode -rw------- and with options: {Timeout: 0s, NoGrowSync: false, NoFreelistSync: true, PreLoadFreelist: false, FreelistType: hashmap, ReadOnly: false, MmapFlags: 8000, InitialMmapSize: 10737418240, PageSize: 0, NoSync: false, OpenFile: 0x0, Mlock: false, Logger: 0xc000c81a40}"}

{"level":"info","ts":"2026-02-27T03:13:01.889009Z","logger":"bbolt","caller":"bbolt@v1.4.2/db.go:321","msg":"Opening bbolt db (/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db) successfully"}

{"level":"info","ts":"2026-02-27T03:13:01.889062Z","caller":"storage/backend.go:80","msg":"opened backend db","path":"/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db","took":"3.527115ms"}

{"level":"info","ts":"2026-02-27T03:13:01.889098Z","caller":"etcdserver/bootstrap.go:220","msg":"restore consistentIndex","index":391962}

{"level":"info","ts":"2026-02-27T03:13:03.164429Z","caller":"etcdserver/bootstrap.go:413","msg":"recovered v2 store from snapshot","snapshot-index":390046,"snapshot-size":"7.1 kB"}

{"level":"info","ts":"2026-02-27T03:13:03.164569Z","caller":"storage/backend.go:108","msg":"Skipping snapshot backend","consistent-index":391962,"snapshot-index":390046}

{"level":"info","ts":"2026-02-27T03:13:03.164610Z","caller":"etcdserver/bootstrap.go:232","msg":"recovered v3 backend","backend-size-bytes":10260480,"backend-size":"10 MB","backend-size-in-use-bytes":10248192,"backend-size-in-use":"10 MB"}

{"level":"info","ts":"2026-02-27T03:13:03.164830Z","caller":"etcdserver/bootstrap.go:90","msg":"Bootstrapping WAL from snapshot"}

{"level":"info","ts":"2026-02-27T03:13:03.455625Z","caller":"etcdserver/bootstrap.go:591","msg":"forcing restart member","cluster-id":"ffa3ef52f8ea6d01","local-member-id":"51be9e926333dcd0","wal-commit-index":391962,"commit-index":391962}

{"level":"info","ts":"2026-02-27T03:13:03.455829Z","caller":"etcdserver/bootstrap.go:94","msg":"bootstrapping cluster"}

{"level":"info","ts":"2026-02-27T03:13:03.456043Z","caller":"etcdserver/bootstrap.go:101","msg":"bootstrapping storage"}

{"level":"info","ts":"2026-02-27T03:13:03.456471Z","caller":"api/capability.go:76","msg":"enabled capabilities for version","cluster-version":"3.6"}

{"level":"info","ts":"2026-02-27T03:13:03.456505Z","caller":"membership/cluster.go:297","msg":"recovered/added member from store","cluster-id":"ffa3ef52f8ea6d01","local-member-id":"51be9e926333dcd0","recovered-remote-peer-id":"51be9e926333dcd0","recovered-remote-peer-urls":["https://10.88.0.39:2380"],"recovered-remote-peer-is-learner":false}

{"level":"info","ts":"2026-02-27T03:13:03.456534Z","caller":"membership/cluster.go:307","msg":"set cluster version from store","cluster-version":"3.6"}

{"level":"info","ts":"2026-02-27T03:13:03.456566Z","caller":"etcdserver/bootstrap.go:109","msg":"bootstrapping raft"}

{"level":"info","ts":"2026-02-27T03:13:03.456680Z","caller":"etcdserver/server.go:312","msg":"bootstrap successfully"}

{"level":"info","ts":"2026-02-27T03:13:03.456818Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1981","msg":"51be9e926333dcd0 switched to configuration voters=(5890319714213944528)"}

{"level":"info","ts":"2026-02-27T03:13:03.456905Z","logger":"raft","caller":"v3@v3.6.0/raft.go:897","msg":"51be9e926333dcd0 became follower at term 3"}

{"level":"info","ts":"2026-02-27T03:13:03.456938Z","logger":"raft","caller":"v3@v3.6.0/raft.go:493","msg":"newRaft 51be9e926333dcd0 [peers: [51be9e926333dcd0], term: 3, commit: 391962, applied: 390046, lastindex: 391962, lastterm: 3]"}

{"level":"warn","ts":"2026-02-27T03:13:03.457879Z","caller":"auth/store.go:1135","msg":"simple token is not cryptographically signed"}

{"level":"info","ts":"2026-02-27T03:13:03.458217Z","caller":"mvcc/kvstore.go:334","msg":"restored last compact revision","meta-bucket-name-key":"finishedCompactRev","restored-compact-revision":374762}

{"level":"info","ts":"2026-02-27T03:13:03.479901Z","caller":"mvcc/kvstore.go:408","msg":"kvstore restored","current-rev":377989}

{"level":"info","ts":"2026-02-27T03:13:03.481069Z","caller":"storage/quota.go:93","msg":"enabled backend quota with default value","quota-name":"v3-applier","quota-size-bytes":2147483648,"quota-size":"2.1 GB"}

{"level":"info","ts":"2026-02-27T03:13:03.481145Z","caller":"etcdserver/corrupt.go:91","msg":"starting initial corruption check","local-member-id":"51be9e926333dcd0","timeout":"15s"}

{"level":"info","ts":"2026-02-27T03:13:03.485766Z","caller":"etcdserver/corrupt.go:172","msg":"initial corruption checking passed; no corruption","local-member-id":"51be9e926333dcd0"}

{"level":"info","ts":"2026-02-27T03:13:03.485853Z","caller":"etcdserver/server.go:589","msg":"starting etcd server","local-member-id":"51be9e926333dcd0","local-server-version":"3.6.4","cluster-id":"ffa3ef52f8ea6d01","cluster-version":"3.6"}

{"level":"info","ts":"2026-02-27T03:13:03.486135Z","caller":"etcdserver/server.go:483","msg":"started as single-node; fast-forwarding election ticks","local-member-id":"51be9e926333dcd0","forward-ticks":9,"forward-duration":"4.5s","election-ticks":10,"election-timeout":"5s"}

{"level":"info","ts":"2026-02-27T03:13:03.486369Z","caller":"embed/etcd.go:292","msg":"now serving peer/client/metrics","local-member-id":"51be9e926333dcd0","initial-advertise-peer-urls":["http://127.0.0.1:2400"],"listen-peer-urls":["http://127.0.0.1:2400"],"advertise-client-urls":["http://127.0.0.1:2399"],"listen-client-urls":["http://127.0.0.1:2399"],"listen-metrics-urls":[]}

{"level":"info","ts":"2026-02-27T03:13:03.486260Z","caller":"embed/etcd.go:640","msg":"serving peer traffic","address":"127.0.0.1:2400"}

{"level":"info","ts":"2026-02-27T03:13:03.486469Z","caller":"embed/etcd.go:611","msg":"cmux::serve","address":"127.0.0.1:2400"}

{"level":"info","ts":"2026-02-27T03:13:03.503434Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1981","msg":"51be9e926333dcd0 switched to configuration voters=(5890319714213944528)"}

{"level":"info","ts":"2026-02-27T03:13:03.503616Z","caller":"membership/cluster.go:650","msg":"ignored already updated member","cluster-id":"ffa3ef52f8ea6d01","local-member-id":"51be9e926333dcd0","updated-remote-peer-id":"51be9e926333dcd0","updated-remote-peer-urls":["https://10.88.0.39:2380"],"updated-remote-peer-is-learner":false}

{"level":"info","ts":"2026-02-27T03:13:06.957913Z","logger":"raft","caller":"v3@v3.6.0/raft.go:988","msg":"51be9e926333dcd0 is starting a new election at term 3"}

{"level":"info","ts":"2026-02-27T03:13:06.957997Z","logger":"raft","caller":"v3@v3.6.0/raft.go:930","msg":"51be9e926333dcd0 became pre-candidate at term 3"}

{"level":"info","ts":"2026-02-27T03:13:06.958092Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1077","msg":"51be9e926333dcd0 received MsgPreVoteResp from 51be9e926333dcd0 at term 3"}

{"level":"info","ts":"2026-02-27T03:13:06.958124Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1693","msg":"51be9e926333dcd0 has received 1 MsgPreVoteResp votes and 0 vote rejections"}

{"level":"info","ts":"2026-02-27T03:13:06.958161Z","logger":"raft","caller":"v3@v3.6.0/raft.go:912","msg":"51be9e926333dcd0 became candidate at term 4"}

{"level":"info","ts":"2026-02-27T03:13:06.995353Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1077","msg":"51be9e926333dcd0 received MsgVoteResp from 51be9e926333dcd0 at term 4"}

{"level":"info","ts":"2026-02-27T03:13:06.995397Z","logger":"raft","caller":"v3@v3.6.0/raft.go:1693","msg":"51be9e926333dcd0 has received 1 MsgVoteResp votes and 0 vote rejections"}

{"level":"info","ts":"2026-02-27T03:13:06.995417Z","logger":"raft","caller":"v3@v3.6.0/raft.go:970","msg":"51be9e926333dcd0 became leader at term 4"}

{"level":"info","ts":"2026-02-27T03:13:06.995428Z","logger":"raft","caller":"v3@v3.6.0/node.go:370","msg":"raft.node: 51be9e926333dcd0 elected leader 51be9e926333dcd0 at term 4"}

{"level":"info","ts":"2026-02-27T03:13:06.995761Z","caller":"etcdserver/server.go:1806","msg":"published local member to cluster through raft","local-member-id":"51be9e926333dcd0","local-member-attributes":"{Name:96515ca796bf-f0affc01 ClientURLs:[http://127.0.0.1:2399]}","cluster-id":"ffa3ef52f8ea6d01","publish-timeout":"15s"}

{"level":"info","ts":"2026-02-27T03:13:06.995804Z","caller":"embed/serve.go:138","msg":"ready to serve client requests"}

{"level":"info","ts":"2026-02-27T03:13:06.995802Z","caller":"embed/serve.go:138","msg":"ready to serve client requests"}

{"level":"warn","ts":"2026-02-27T03:13:06.996897Z","caller":"v3rpc/grpc.go:52","msg":"etcdserver: failed to register grpc metrics","error":"descriptor Desc{fqName: \"grpc_server_msg_sent_total\", help: \"Total number of gRPC stream messages sent by the server.\", constLabels: {}, variableLabels: {grpc_type,grpc_service,grpc_method}} already exists with the same fully-qualified name and const label values"}

{"level":"info","ts":"2026-02-27T03:13:06.996929Z","caller":"embed/serve.go:220","msg":"serving client traffic insecurely; this is strongly discouraged!","traffic":"http","address":"127.0.0.1:2402"}

{"level":"info","ts":"2026-02-27T03:13:06.997276Z","caller":"v3rpc/health.go:63","msg":"grpc service status changed","service":"","status":"SERVING"}

{"level":"info","ts":"2026-02-27T03:13:07.002140Z","caller":"embed/serve.go:220","msg":"serving client traffic insecurely; this is strongly discouraged!","traffic":"grpc","address":"127.0.0.1:2399"}

time="2026-02-27T03:13:07Z" level=info msg="Connected to etcd v3.6.4 - datastore using 10248192 of 10260480 bytes"

time="2026-02-27T03:13:07Z" level=info msg="Defragmenting etcd database"

{"level":"info","ts":"2026-02-27T03:13:07.010548Z","caller":"v3rpc/maintenance.go:110","msg":"starting defragment"}

{"level":"info","ts":"2026-02-27T03:13:07.011747Z","caller":"backend/backend.go:522","msg":"defragmenting","path":"/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db","current-db-size-bytes":10260480,"current-db-size":"10 MB","current-db-size-in-use-bytes":10248192,"current-db-size-in-use":"10 MB"}

{"level":"info","ts":"2026-02-27T03:13:07.212254Z","logger":"bbolt","caller":"backend/backend.go:574","msg":"Opening db file (/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db) with mode -rw------- and with options: {Timeout: 0s, NoGrowSync: false, NoFreelistSync: true, PreLoadFreelist: false, FreelistType: hashmap, ReadOnly: false, MmapFlags: 8000, InitialMmapSize: 10737418240, PageSize: 0, NoSync: false, OpenFile: 0x0, Mlock: false, Logger: 0xc000c81a40}"}

{"level":"info","ts":"2026-02-27T03:13:07.220311Z","logger":"bbolt","caller":"bbolt@v1.4.2/db.go:321","msg":"Opening bbolt db (/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db) successfully"}

{"level":"info","ts":"2026-02-27T03:13:07.220371Z","caller":"backend/backend.go:592","msg":"finished defragmenting directory","path":"/var/lib/rancher/k3s/server/db/etcd-tmp/member/snap/db","current-db-size-bytes-diff":-8192,"current-db-size-bytes":10252288,"current-db-size":"10 MB","current-db-size-in-use-bytes-diff":-4096,"current-db-size-in-use-bytes":10244096,"current-db-size-in-use":"10 MB","took":"209.587651ms"}

{"level":"info","ts":"2026-02-27T03:13:07.220404Z","caller":"v3rpc/maintenance.go:118","msg":"finished defragment"}

{"level":"info","ts":"2026-02-27T03:13:07.220419Z","caller":"v3rpc/health.go:63","msg":"grpc service status changed","service":"","status":"SERVING"}

time="2026-02-27T03:13:07Z" level=info msg="Datastore using 10244096 of 10252288 bytes after defragment"

time="2026-02-27T03:13:07Z" level=info msg="etcd temporary data store connection OK"

time="2026-02-27T03:13:07Z" level=info msg="Reconciling bootstrap data between datastore and disk"

time="2026-02-27T03:13:07Z" level=info msg="stopping etcd"

{"level":"info","ts":"2026-02-27T03:13:07.234677Z","caller":"embed/etcd.go:426","msg":"closing etcd server","name":"96515ca796bf-f0affc01","data-dir":"/var/lib/rancher/k3s/server/db/etcd-tmp","advertise-peer-urls":["http://127.0.0.1:2400"],"advertise-client-urls":["http://127.0.0.1:2399"]}

{"level":"error","ts":"2026-02-27T03:13:07.235483Z","caller":"embed/etcd.go:912","msg":"setting up serving from embedded etcd failed.","error":"http: Server closed","stacktrace":"go.etcd.io/etcd/server/v3/embed.(*Etcd).errHandler\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/etcd.go:912\ngo.etcd.io/etcd/server/v3/embed.(*serveCtx).startHandler.func1\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/serve.go:90"}

{"level":"info","ts":"2026-02-27T03:13:07.235596Z","caller":"etcdserver/server.go:1281","msg":"skipped leadership transfer for single voting member cluster","local-member-id":"51be9e926333dcd0","current-leader-member-id":"51be9e926333dcd0"}

{"level":"info","ts":"2026-02-27T03:13:07.235723Z","caller":"etcdserver/server.go:2321","msg":"server has stopped; stopping cluster version's monitor"}

{"level":"info","ts":"2026-02-27T03:13:07.235732Z","caller":"etcdserver/server.go:2344","msg":"server has stopped; stopping storage version's monitor"}

{"level":"error","ts":"2026-02-27T03:13:07.235755Z","caller":"embed/etcd.go:912","msg":"setting up serving from embedded etcd failed.","error":"accept tcp 127.0.0.1:2402: use of closed network connection","stacktrace":"go.etcd.io/etcd/server/v3/embed.(*Etcd).errHandler\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/etcd.go:912\ngo.etcd.io/etcd/server/v3/embed.(*Etcd).startHandler.func1\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/etcd.go:906"}

time="2026-02-27T03:13:07Z" level=info msg="certificate CN=kube-apiserver signed by CN=k3s-server-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:07 +0000 UTC"

time="2026-02-27T03:13:07Z" level=info msg="certificate CN=etcd-peer signed by CN=etcd-peer-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:07 +0000 UTC"

time="2026-02-27T03:13:07Z" level=info msg="certificate CN=etcd-server signed by CN=etcd-server-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:07 +0000 UTC"

{"level":"info","ts":"2026-02-27T03:13:07.245690Z","caller":"embed/etcd.go:621","msg":"stopping serving peer traffic","address":"127.0.0.1:2400"}

{"level":"error","ts":"2026-02-27T03:13:07.245901Z","caller":"embed/etcd.go:912","msg":"setting up serving from embedded etcd failed.","error":"accept tcp 127.0.0.1:2400: use of closed network connection","stacktrace":"go.etcd.io/etcd/server/v3/embed.(*Etcd).errHandler\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/etcd.go:912\ngo.etcd.io/etcd/server/v3/embed.(*Etcd).startHandler.func1\n\t/go/pkg/mod/github.com/k3s-io/etcd/server/v3@v3.6.4-k3s3/embed/etcd.go:906"}

{"level":"info","ts":"2026-02-27T03:13:07.245969Z","caller":"embed/etcd.go:626","msg":"stopped serving peer traffic","address":"127.0.0.1:2400"}

{"level":"info","ts":"2026-02-27T03:13:07.245991Z","caller":"embed/etcd.go:428","msg":"closed etcd server","name":"96515ca796bf-f0affc01","data-dir":"/var/lib/rancher/k3s/server/db/etcd-tmp","advertise-peer-urls":["http://127.0.0.1:2400"],"advertise-client-urls":["http://127.0.0.1:2399"]}

time="2026-02-27T03:13:07Z" level=info msg="certificate CN=k3s,O=k3s signed by CN=k3s-server-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:07 +0000 UTC"

time="2026-02-27T03:13:07Z" level=warning msg="dynamiclistener [::]:6443: no cached certificate available for preload - deferring certificate load until storage initialization or first client request"

time="2026-02-27T03:13:07Z" level=info msg="Active TLS secret / (ver=) (count 10): map[listener.cattle.io/cn-10.43.0.1:10.43.0.1 listener.cattle.io/cn-10.88.0.39:10.88.0.39 listener.cattle.io/cn-127.0.0.1:127.0.0.1 listener.cattle.io/cn-__1-f16284:::1 listener.cattle.io/cn-kubernetes:kubernetes listener.cattle.io/cn-kubernetes.default:kubernetes.default listener.cattle.io/cn-kubernetes.default.svc:kubernetes.default.svc listener.cattle.io/cn-kubernetes.default.svc.cluster.local:kubernetes.default.svc.cluster.local listener.cattle.io/cn-local-node:local-node listener.cattle.io/cn-localhost:localhost listener.cattle.io/fingerprint:SHA1=41E49611D01D03BE0650BC7B6EF701146B7AA183]"

time="2026-02-27T03:13:08Z" level=info msg="Password verified locally for node local-node"

time="2026-02-27T03:13:08Z" level=info msg="certificate CN=local-node signed by CN=k3s-server-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:08 +0000 UTC"

time="2026-02-27T03:13:09Z" level=info msg="certificate CN=system:node:local-node,O=system:nodes signed by CN=k3s-client-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:09 +0000 UTC"

time="2026-02-27T03:13:09Z" level=info msg="certificate CN=system:kube-proxy signed by CN=k3s-client-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:09 +0000 UTC"

time="2026-02-27T03:13:09Z" level=info msg="certificate CN=system:k3s-controller signed by CN=k3s-client-ca@1772097151: notBefore=2026-02-26 09:12:31 +0000 UTC notAfter=2027-02-27 03:13:09 +0000 UTC"

time="2026-02-27T03:13:09Z" level=info msg="Module overlay was already loaded"

time="2026-02-27T03:13:09Z" level=info msg="Module nf_conntrack was already loaded"

time="2026-02-27T03:13:09Z" level=info msg="Module br_netfilter was already loaded"

time="2026-02-27T03:13:09Z" level=info msg="Module iptable_nat was already loaded"

time="2026-02-27T03:13:09Z" level=info msg="Module iptable_filter was already loaded"

time="2026-02-27T03:13:09Z" level=warning msg="Failed to load kernel module nft-expr-counter with modprobe"

time="2026-02-27T03:13:09Z" level=warning msg="Failed to load kernel module nfnetlink-subsys-11 with modprobe"

time="2026-02-27T03:13:09Z" level=warning msg="Failed to load kernel module nft-chain-2-nat with modprobe"

time="2026-02-27T03:13:09Z" level=info msg="Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_established' to 86400"

time="2026-02-27T03:13:09Z" level=info msg="Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_close_wait' to 3600"

time="2026-02-27T03:13:09Z" level=info msg="Logging containerd to /var/lib/rancher/k3s/agent/containerd/containerd.log"

time="2026-02-27T03:13:09Z" level=info msg="Running containerd -c /var/lib/rancher/k3s/agent/etc/containerd/config.toml"

time="2026-02-27T03:13:09Z" level=info msg="Polling for API server readiness: GET /readyz failed: the server is currently unable to handle the request"

time="2026-02-27T03:13:10Z" level=info msg="Waiting for containerd startup: rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing: dial unix /run/k3s/containerd/containerd.sock: connect: connection refused\""

time="2026-02-27T03:13:11Z" level=info msg="Waiting for containerd startup: rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing: dial unix /run/k3s/containerd/containerd.sock: connect: connection refused\""

time="2026-02-27T03:13:12Z" level=info msg="containerd is now running"

time="2026-02-27T03:13:12Z" level=info msg="Importing images from /var/lib/rancher/k3s/agent/images/k3s-airgap-images.tar"

time="2026-02-27T03:13:14Z" level=info msg="Connecting to proxy" url="wss://127.0.0.1:6443/v1-k3s/connect"

time="2026-02-27T03:13:14Z" level=info msg="Creating k3s-cert-monitor event broadcaster"

time="2026-02-27T03:13:14Z" level=info msg="Running kubelet --cloud-provider=external --config-dir=/var/lib/rancher/k3s/agent/etc/kubelet.conf.d --containerd=/run/k3s/containerd/containerd.sock --hostname-override=local-node --kubeconfig=/var/lib/rancher/k3s/agent/kubelet.kubeconfig --node-ip=10.88.0.39 --node-labels= --read-only-port=0 --runtime-cgroups=/k3s"

time="2026-02-27T03:13:14Z" level=info msg="Handling backend connection request [local-node]"

time="2026-02-27T03:13:14Z" level=info msg="Connected to proxy" url="wss://127.0.0.1:6443/v1-k3s/connect"

time="2026-02-27T03:13:14Z" level=info msg="Remotedialer connected to proxy" url="wss://127.0.0.1:6443/v1-k3s/connect"

time="2026-02-27T03:13:14Z" level=info msg="Starting etcd for existing cluster member"

time="2026-02-27T03:13:14Z" level=info msg=start

time="2026-02-27T03:13:14Z" level=info msg="schedule, now=2026-02-27T03:13:14Z, entry=1, next=2026-02-27T12:00:00Z"

time="2026-02-27T03:13:14Z" level=info msg="Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused\""
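The last entry shows k3s failing to reach etcd's client port at 127.0.0.1:2379 right after "Starting etcd for existing cluster member". As a rough way to check by hand whether anything is listening there while k3s is restarting, here is a minimal Python sketch that does a plain TCP dial. The helper name `etcd_port_open` is hypothetical, and a successful connect only shows that the port accepts connections, not that etcd is healthy.

```python
import socket


def etcd_port_open(host: str = "127.0.0.1", port: int = 2379, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    This is only a reachability probe (hypothetical helper, not part of k3s);
    it does not speak the etcd gRPC protocol or check cluster health.
    """
    try:
        # A plain TCP dial, the same step that failed with "connection refused" in the log
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # ConnectionRefusedError and timeouts are both OSError subclasses
        return False


if __name__ == "__main__":
    # 2379 is the etcd client port from the last log line
    state = "open" if etcd_port_open() else "closed/refused"
    print(f"127.0.0.1:2379 -> {state}")
```

Run it on the k3s host while the service is starting; a "closed/refused" result matches the final log line, meaning etcd never got as far as listening on its client port.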

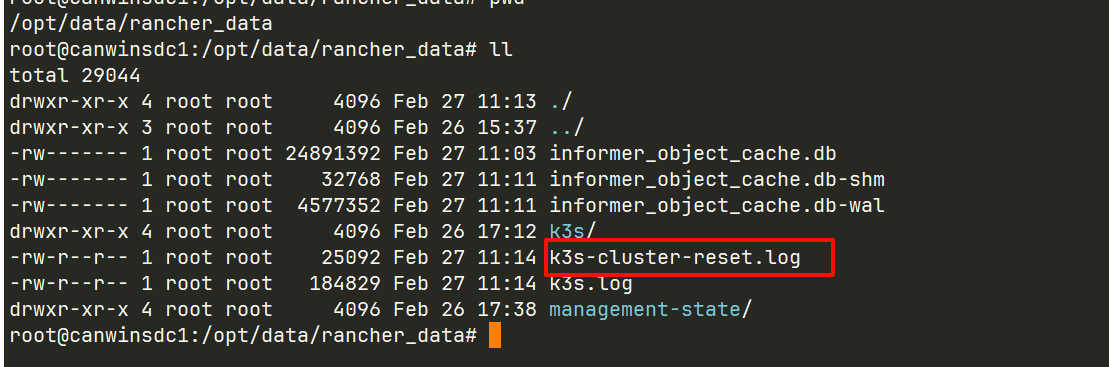

These are the logs from just before the crash. Could one of the experts here please take a look?