Environment information:

RKE2 version: v1.34.4+rke2r1

Node CPU architecture, operating system, and version:

Linux xxx 6.8.0-101-generic #101-Ubuntu SMP PREEMPT_DYNAMIC Mon Feb 9 10:15:05 UTC 2026 x86_64 x86_64 x86_64 GNU/Linux

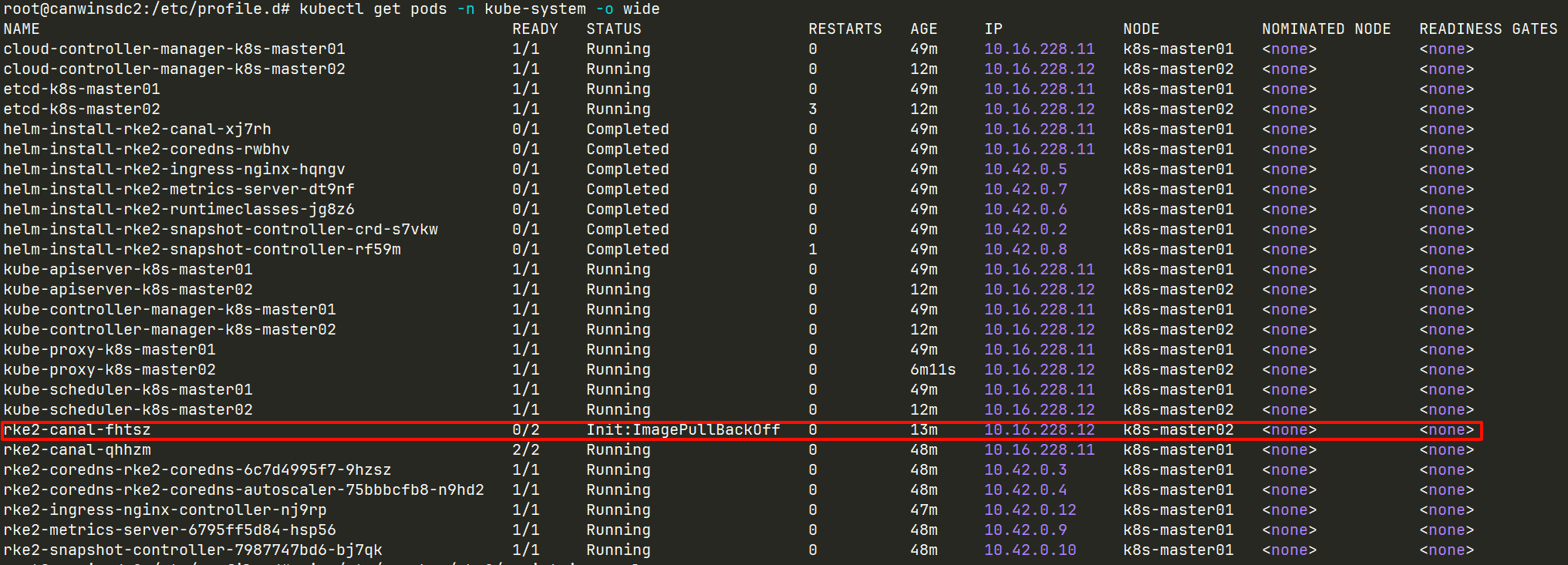

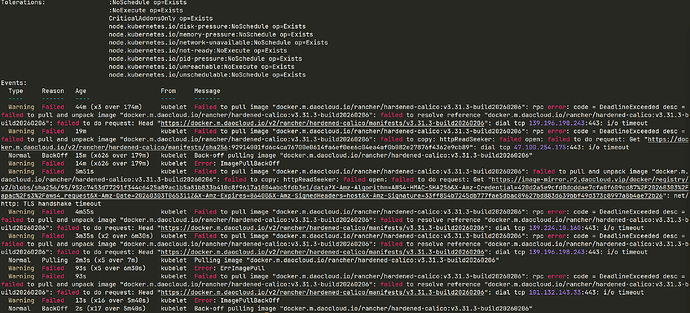

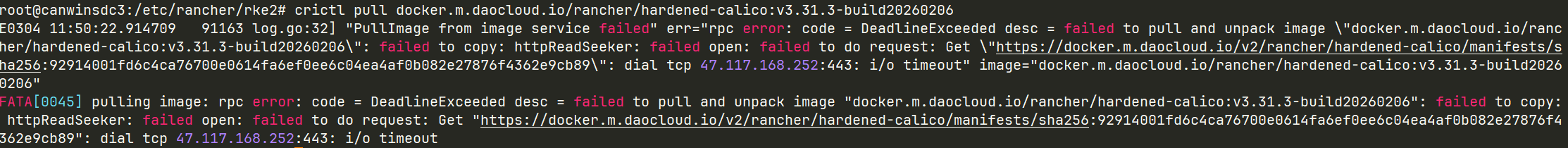

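Problem description:

After installing and starting RKE2, the service log reports that the runtime image cannot be found and that etcd is unavailable.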
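The two symptoms can be checked directly on the node. The sketch below is a minimal example: the socket path and image directory are copied from the log output in this post, while the helper names `socket_state` and `archive_count` are hypothetical and only illustrate the checks.

```shell
#!/bin/sh
# Report whether a path exists as a Unix socket.
socket_state() {
    if [ -S "$1" ]; then
        echo present
    else
        echo missing
    fi
}

# Count regular files directly inside a directory (0 if it does not exist).
archive_count() {
    if [ -d "$1" ]; then
        find "$1" -maxdepth 1 -type f | wc -l | tr -d ' '
    else
        echo 0
    fi
}

# Paths as they appear in the rke2-server log of this post.
echo "containerd socket: $(socket_state /run/k3s/containerd/containerd.sock)"
echo "local image archives: $(archive_count /var/lib/rancher/rke2/agent/images)"
```

A missing socket together with zero local archives would match the log messages, but it does not by itself explain why the image pull does not complete.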

Steps to reproduce:

- Commands used to install RKE2:

vim /etc/rancher/rke2/config.yaml

token: canwin-sdc

node-name: k8s-master01

tls-san: 10.xxx.xxx.xx

system-default-registry: "docker.m.daocloud.io"

kube-proxy-arg:

- proxy-mode=ipvs

- ipvs-strict-arp=true

curl -sfL https://rancher-mirror.rancher.cn/rke2/install.sh | INSTALL_RKE2_MIRROR=cn sh -

systemctl enable --now rke2-server
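Written out as a file, the configuration from the steps above might look like the following sketch. It assumes the two kube-proxy arguments were meant as a YAML list under `kube-proxy-arg`, and it writes to a scratch path held in a hypothetical `CONFIG_PATH` variable rather than to `/etc/rancher/rke2/config.yaml`. The `top_level_keys` helper is illustrative only.

```shell
#!/bin/sh
# CONFIG_PATH is a hypothetical variable; it defaults to a temporary file so
# the sketch never overwrites a real RKE2 configuration.
CONFIG_PATH="${CONFIG_PATH:-$(mktemp)}"

# Quoted heredoc delimiter: the content is written literally, no expansion.
cat > "$CONFIG_PATH" <<'EOF'
token: canwin-sdc
node-name: k8s-master01
tls-san: 10.xxx.xxx.xx
system-default-registry: "docker.m.daocloud.io"
kube-proxy-arg:
  - proxy-mode=ipvs
  - ipvs-strict-arp=true
EOF

# Count lines that look like top-level keys (start in column 1, end the key
# with a colon). List items are indented and therefore not counted.
top_level_keys() {
    grep -c '^[a-z][a-z-]*:' "$1"
}

echo "top-level keys: $(top_level_keys "$CONFIG_PATH")"   # prints "top-level keys: 5"
```

If the count is lower than expected, one possible cause is that a key and its first list item ended up on the same line.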

Actual result:

journalctl -xeu rke2-server --no-pager

Logs:

Mar 03 10:08:59 canwinsdc1 systemd[1]: Starting rke2-server.service - Rancher Kubernetes Engine v2 (server)…

░░ Subject: A start job for unit rke2-server.service has begun execution

░░ Defined-By: systemd

░░ Support: Enterprise open source support | Ubuntu

░░

░░ A start job for unit rke2-server.service has begun execution.

░░

░░ The job identifier is 6470255.

Mar 03 10:08:59 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:08:59+08:00” level=warning msg=“not running in CIS mode”

Mar 03 10:08:59 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:08:59+08:00” level=info msg=“Applying Pod Security Admission Configuration”

Mar 03 10:08:59 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:08:59+08:00” level=info msg=“Starting rke2 v1.34.4+rke2r1 (c6b97dc03cefec17e8454a6f45b29f4e3d0a81d6)”

Mar 03 10:08:59 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:08:59+08:00” level=info msg=“Managed etcd cluster initializing”

Mar 03 10:09:01 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:01+08:00” level=info msg=“Password verified locally for node canwinsdc1”

Mar 03 10:09:01 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:01+08:00” level=info msg=“certificate CN=canwinsdc1 signed by CN=rke2-server-ca@1772502570: notBefore=2026-03-03 01:49:30 +0000 UTC notAfter=2027-03-03 02:09:01 +0000 UTC”

Mar 03 10:09:01 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:01+08:00” level=info msg=“certificate CN=system:node:canwinsdc1,O=system:nodes signed by CN=rke2-client-ca@1772502570: notBefore=2026-03-03 01:49:30 +0000 UTC notAfter=2027-03-03 02:09:01 +0000 UTC”

Mar 03 10:09:01 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:01+08:00” level=info msg=“certificate CN=system:kube-proxy signed by CN=rke2-client-ca@1772502570: notBefore=2026-03-03 01:49:30 +0000 UTC notAfter=2027-03-03 02:09:01 +0000 UTC”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“certificate CN=system:rke2-controller signed by CN=rke2-client-ca@1772502570: notBefore=2026-03-03 01:49:30 +0000 UTC notAfter=2027-03-03 02:09:02 +0000 UTC”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Module overlay was already loaded”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Module nf_conntrack was already loaded”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Module br_netfilter was already loaded”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Module iptable_nat was already loaded”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Module iptable_filter was already loaded”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=warning msg=“Failed to load kernel module nft-expr-counter with modprobe”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Removed kube-proxy static pod manifest”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Checking local image archives in /var/lib/rancher/rke2/agent/images for index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=warning msg=“Failed to load runtime image index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1 from tarball: no local image available for index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1: not found in any file in /var/lib/rancher/rke2/agent/images: image not found”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Checking local image archives in /var/lib/rancher/rke2/agent/images for index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Found crun container runtime at /usr/bin/crun”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=warning msg=“Failed to load runtime image index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1 from tarball: no local image available for index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1: not found in any file in /var/lib/rancher/rke2/agent/images: image not found”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Pulling runtime image index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Waiting for cri connection: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial unix /run/k3s/containerd/containerd.sock: connect: no such file or directory"”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Connecting to proxy” url=“wss://127.0.0.1:9345/v1-rke2/connect”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Creating rke2-cert-monitor event broadcaster”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Starting etcd for new cluster, cluster-reset=false”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=start

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“schedule, now=2026-03-03T10:09:02+08:00, entry=1, next=2026-03-03T12:00:00+08:00”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused"”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Running kube-apiserver --advertise-port=6443 --allow-privileged=true --anonymous-auth=false --api-audiences=https://kubernetes.default.svc.cluster.local,rke2 --authorization-mode=Node,RBAC --bind-address=0.0.0.0 --cert-dir=/var/lib/rancher/rke2/server/tls/temporary-certs --client-ca-file=/var/lib/rancher/rke2/server/tls/client-ca.crt --egress-selector-config-file=/var/lib/rancher/rke2/server/etc/egress-selector-config.yaml --enable-admission-plugins=NodeRestriction --enable-aggregator-routing=true --enable-bootstrap-token-auth=true --encryption-provider-config=/var/lib/rancher/rke2/server/cred/encryption-config.json --encryption-provider-config-automatic-reload=true --etcd-cafile=/var/lib/rancher/rke2/server/tls/etcd/server-ca.crt --etcd-certfile=/var/lib/rancher/rke2/server/tls/etcd/client.crt --etcd-keyfile=/var/lib/rancher/rke2/server/tls/etcd/client.key --etcd-servers=https://127.0.0.1:2379 --kubelet-certificate-authority=/var/lib/rancher/rke2/server/tls/server-ca.crt --kubelet-client-certificate=/var/lib/rancher/rke2/server/tls/client-kube-apiserver.crt --kubelet-client-key=/var/lib/rancher/rke2/server/tls/client-kube-apiserver.key --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname --profiling=false --proxy-client-cert-file=/var/lib/rancher/rke2/server/tls/client-auth-proxy.crt --proxy-client-key-file=/var/lib/rancher/rke2/server/tls/client-auth-proxy.key --requestheader-allowed-names=system:auth-proxy --requestheader-client-ca-file=/var/lib/rancher/rke2/server/tls/request-header-ca.crt --requestheader-extra-headers-prefix=X-Remote-Extra- --requestheader-group-headers=X-Remote-Group --requestheader-username-headers=X-Remote-User --secure-port=6443 --service-account-issuer=https://kubernetes.default.svc.cluster.local --service-account-key-file=/var/lib/rancher/rke2/server/tls/service.key 
--service-account-signing-key-file=/var/lib/rancher/rke2/server/tls/service.current.key --service-cluster-ip-range=10.43.0.0/16 --service-node-port-range=30000-32767 --storage-backend=etcd3 --tls-cert-file=/var/lib/rancher/rke2/server/tls/serving-kube-apiserver.crt --tls-cipher-suites=TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305,TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305 --tls-private-key-file=/var/lib/rancher/rke2/server/tls/serving-kube-apiserver.key”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Removed kube-apiserver static pod manifest”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Running kube-scheduler --authentication-kubeconfig=/var/lib/rancher/rke2/server/cred/scheduler.kubeconfig --authorization-kubeconfig=/var/lib/rancher/rke2/server/cred/scheduler.kubeconfig --bind-address=127.0.0.1 --kubeconfig=/var/lib/rancher/rke2/server/cred/scheduler.kubeconfig --profiling=false --secure-port=10259 --tls-cert-file=/var/lib/rancher/rke2/server/tls/kube-scheduler/kube-scheduler.crt --tls-private-key-file=/var/lib/rancher/rke2/server/tls/kube-scheduler/kube-scheduler.key”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Running kube-controller-manager --allocate-node-cidrs=true --authentication-kubeconfig=/var/lib/rancher/rke2/server/cred/controller.kubeconfig --authorization-kubeconfig=/var/lib/rancher/rke2/server/cred/controller.kubeconfig --bind-address=127.0.0.1 --cluster-cidr=10.42.0.0/16 --cluster-signing-kube-apiserver-client-cert-file=/var/lib/rancher/rke2/server/tls/client-ca.nochain.crt --cluster-signing-kube-apiserver-client-key-file=/var/lib/rancher/rke2/server/tls/client-ca.key --cluster-signing-kubelet-client-cert-file=/var/lib/rancher/rke2/server/tls/client-ca.nochain.crt --cluster-signing-kubelet-client-key-file=/var/lib/rancher/rke2/server/tls/client-ca.key --cluster-signing-kubelet-serving-cert-file=/var/lib/rancher/rke2/server/tls/server-ca.nochain.crt --cluster-signing-kubelet-serving-key-file=/var/lib/rancher/rke2/server/tls/server-ca.key --cluster-signing-legacy-unknown-cert-file=/var/lib/rancher/rke2/server/tls/server-ca.nochain.crt --cluster-signing-legacy-unknown-key-file=/var/lib/rancher/rke2/server/tls/server-ca.key --configure-cloud-routes=false --controllers=,tokencleaner,-service,-route,-cloud-node-lifecycle --kubeconfig=/var/lib/rancher/rke2/server/cred/controller.kubeconfig --profiling=false --root-ca-file=/var/lib/rancher/rke2/server/tls/server-ca.crt --secure-port=10257 --service-account-private-key-file=/var/lib/rancher/rke2/server/tls/service.current.key --service-cluster-ip-range=10.43.0.0/16 --tls-cert-file=/var/lib/rancher/rke2/server/tls/kube-controller-manager/kube-controller-manager.crt --tls-private-key-file=/var/lib/rancher/rke2/server/tls/kube-controller-manager/kube-controller-manager.key --use-service-account-credentials=true"

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg="Running cloud-controller-manager --allocate-node-cidrs=true --authentication-kubeconfig=/var/lib/rancher/rke2/server/cred/cloud-controller.kubeconfig --authorization-kubeconfig=/var/lib/rancher/rke2/server/cred/cloud-controller.kubeconfig --bind-address=127.0.0.1 --cloud-config=/var/lib/rancher/rke2/server/etc/cloud-config.yaml --cloud-provider=rke2 --cluster-cidr=10.42.0.0/16 --configure-cloud-routes=false --controllers=,-route,-service --kubeconfig=/var/lib/rancher/rke2/server/cred/cloud-controller.kubeconfig --leader-elect-resource-name=rke2-cloud-controller-manager --node-status-update-frequency=1m0s --profiling=false”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Server node token is available at /var/lib/rancher/rke2/server/token”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Handling backend connection request [canwinsdc1]”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Connected to proxy” url=“wss://127.0.0.1:9345/v1-rke2/connect”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Remotedialer connected to proxy” url=“wss://127.0.0.1:9345/v1-rke2/connect”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“To join server node to cluster: rke2 server -s https://10.16.228.11:9345 -t {SERVER_NODE_TOKEN}"

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time="2026-03-03T10:09:02+08:00" level=info msg="Agent node token is available at /var/lib/rancher/rke2/server/agent-token"

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time="2026-03-03T10:09:02+08:00" level=info msg="To join agent node to cluster: rke2 agent -s https://10.16.228.11:9345 -t {AGENT_NODE_TOKEN}”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Wrote kubeconfig /etc/rancher/rke2/rke2.yaml”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Run: rke2 kubectl”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=error msg=“Sending HTTP/1.1 503 response to 127.0.0.1:54312: runtime core not ready”

Mar 03 10:09:02 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:02+08:00” level=info msg=“Running kube-proxy --cluster-cidr=10.42.0.0/16 --conntrack-max-per-core=0 --conntrack-tcp-timeout-close-wait=0s --conntrack-tcp-timeout-established=0s --healthz-bind-address=127.0.0.1 --hostname-override=canwinsdc1 --kubeconfig=/var/lib/rancher/rke2/agent/kubeproxy.kubeconfig --proxy-mode=iptables”

Mar 03 10:09:07 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:07+08:00” level=info msg=“Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused"”

Mar 03 10:09:12 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:12+08:00” level=info msg=“Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused"”

Mar 03 10:09:17 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:17+08:00” level=info msg=“Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused"”

Mar 03 10:09:22 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:22+08:00” level=info msg=“Waiting for cri connection: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial unix /run/k3s/containerd/containerd.sock: connect: no such file or directory"”

Mar 03 10:09:22 canwinsdc1 rke2[1007358]: time=“2026-03-03T10:09:22+08:00” level=info msg=“Failed to test etcd connection: failed to get etcd status: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:2379: connect: connection refused"”
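The log shows kube-proxy started with `--proxy-mode=iptables` and `--hostname-override=canwinsdc1`, and the runtime image requested from `index.docker.io`. The configuration listed in the reproduction steps sets `proxy-mode=ipvs`, `node-name: k8s-master01`, and `system-default-registry: "docker.m.daocloud.io"`. A small filter can pull these effective values out of the journal for comparison. This is a sketch only: `effective_settings` is a hypothetical helper name, and the sample input is abbreviated from the lines above. It surfaces the mismatch but does not establish its cause.

```shell
#!/bin/sh
# Read journal text on stdin and print the settings RKE2 reported using.
# The character classes stop at the first quote, straight or curly.
effective_settings() {
    log=$(cat)
    printf '%s\n' "$log" | grep -o -- '--proxy-mode=[a-z]*' | tail -n 1
    printf '%s\n' "$log" | grep -o -- '--hostname-override=[A-Za-z0-9.-]*' | tail -n 1
    printf '%s\n' "$log" | grep -o 'Pulling runtime image [A-Za-z0-9./:_-]*' | tail -n 1
}

# Abbreviated excerpts from the log in this post ("..." marks omitted text).
effective_settings <<'EOF'
... level=info msg=“Pulling runtime image index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1”
... level=info msg=“Running kube-proxy --cluster-cidr=10.42.0.0/16 ... --hostname-override=canwinsdc1 ... --proxy-mode=iptables”
EOF
# prints:
#   --proxy-mode=iptables
#   --hostname-override=canwinsdc1
#   Pulling runtime image index.docker.io/rancher/rke2-runtime:v1.34.4-rke2r1
```

On the node itself the same function can read the live journal, for example `journalctl -u rke2-server --no-pager | effective_settings`.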